Why JMH?

This guide uses JMH (Java Microbenchmark Harness), the standard benchmarking framework for the JVM. JMH is developed as part of the OpenJDK project by the same engineers who build the JVM itself, so it accounts for JVM behaviors such as JIT compilation, dead code elimination, and constant folding that can silently invalidate naive benchmarks. It handles warmup, fork isolation, and statistical analysis out of the box, so you can focus on writing the code you want to measure.

Your First Benchmark

Let’s start with the simplest possible JMH benchmark: a single method that measures how fast a recursive Fibonacci function runs.

Project Setup

The recommended way to use JMH with Maven is through its archetype, which generates a project pre-configured with the annotation processor and uber-JAR packaging.

This creates a my-benchmarks/ directory with the following structure:

- my-benchmarks/
  - pom.xml
  - src/main/java/com/example/
    - MyBenchmark.java

The generated pom.xml includes jmh-core (the runtime library), jmh-generator-annprocess (the annotation processor that generates benchmark harness code at compile time), and maven-shade-plugin (which packages everything into a single executable benchmarks.jar).

Writing the Benchmark

The archetype generates a stub MyBenchmark.java with an empty @Benchmark method. Open src/main/java/com/example/MyBenchmark.java and replace its contents with:

src/main/java/com/example/MyBenchmark.java

@Benchmark is the only annotation you need; JMH generates the measurement harness around it. The method returns its result, which prevents the JVM from eliminating the computation as dead code (more on this in Avoiding Common Pitfalls).
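As a rough illustration of the shape such a benchmark takes, the sketch below shows a naive recursive Fibonacci and a method that returns its result. The class and method names are assumptions, not the guide's original listing, and the @Benchmark annotation (org.openjdk.jmh.annotations.Benchmark) appears only as a comment so the snippet compiles with a plain JDK. In the generated project you would import it and annotate the method for real.

```java
// Sketch only: names are illustrative, not the guide's original listing.
class FibonacciSketch {

    // Naive recursive Fibonacci: exponential time, which makes it a
    // convenient CPU-bound workload to measure.
    static long fibonacci(int n) {
        if (n <= 1) {
            return n;
        }
        return fibonacci(n - 1) + fibonacci(n - 2);
    }

    // In a JMH project this method would carry @Benchmark.
    // Returning the value instead of discarding it lets the harness
    // consume the result, so the call cannot be treated as dead code.
    long fib() {
        return fibonacci(30);
    }
}
```

Calling `new FibonacciSketch().fib()` outside JMH is a quick way to sanity-check the workload before measuring it.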

Building and Running

Build the uber-JAR and run the benchmark with either Maven or Gradle.

With no configuration, JMH reports throughput (thrpt), measured in operations per second.

Configuring Your Benchmark

The previous benchmark used all JMH defaults. In practice, you want to embed settings into your benchmark class using annotations. This makes benchmarks self-describing and reproducible regardless of how they are invoked. Update MyBenchmark.java:

src/main/java/com/example/MyBenchmark.java

After rebuilding and rerunning with Maven or Gradle, the results are reported as avgt (average time) in ms/op. A single fork completes in seconds instead of minutes. Computing fibonacci(30) takes about 3.1 milliseconds.

The following sections break down each annotation.

Benchmark Mode

@BenchmarkMode controls what JMH measures. It can be placed on a class (where it applies to all methods) or on individual methods.

- Mode.Throughput (thrpt): Measures operations per second. Use this to quantify system capacity and compare throughput across implementations.

- Mode.AverageTime (avgt): Measures average time per operation. The general-purpose choice for latency benchmarking when you care about typical performance.

- Mode.SampleTime (sample): Samples individual operation times and reports percentiles (p50, p90, p99, p99.9). Use this to understand tail latency, not just the average.

- Mode.SingleShotTime (ss): Measures the time for a single invocation with no warmup. Use this to benchmark cold-start performance and one-shot initialization costs.

Mode.SampleTime is particularly useful because it reports percentiles directly. In the example run, the median latency is 38 ns while the p99.99 is 3.2 microseconds, an 84x spike. Percentile data like this is invaluable for understanding real-world latency characteristics.
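To make the percentile figures quoted for sample mode more concrete, here is a small sketch of how percentiles can be derived from raw latency samples. It uses the nearest-rank method, which is an assumption chosen for simplicity and not necessarily how JMH computes its own percentiles. The class and method names are hypothetical.

```java
import java.util.Arrays;

// Sketch only: nearest-rank percentiles over raw latency samples.
class PercentileSketch {

    // Returns the smallest sample such that at least p percent of all
    // samples are less than or equal to it (nearest-rank definition).
    static long percentile(long[] samples, double p) {
        long[] sorted = samples.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(p / 100.0 * sorted.length);
        return sorted[Math.max(rank, 1) - 1];
    }

    // Ratio between a tail percentile and the median,
    // for example 3200 ns / 38 ns from the figures quoted in the text.
    static double tailToMedianRatio(double tailNanos, double medianNanos) {
        return tailNanos / medianNanos;
    }
}
```

With the figures from the text, `tailToMedianRatio(3200, 38)` comes out at roughly 84, which is where the "84x spike" comes from. A single slow sample barely moves the median but dominates the highest percentiles, which is why an average alone can hide tail latency.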

State and Scope

@State marks a class as a holder for benchmark data. Without it, you cannot use instance fields in benchmark methods. The Scope parameter controls how state is shared:

- Scope.Thread: Creates one state instance per thread with no sharing between threads. The default choice for most benchmarks.

- Scope.Benchmark: Shares one state instance across all threads. Use this when measuring contention and thread-safety overhead.

- Scope.Group: Shares one state instance per thread group. Use this for asymmetric benchmarks (e.g., producer/consumer patterns).

State can also live in a separate state class that JMH passes to the benchmark method as a parameter.

Fork, Warmup, Measurement, and Output Unit

These annotations control the execution strategy and output formatting:

- @Fork: Controls how many separate JVM processes to run. Forks run sequentially, not in parallel. Each fork starts a fresh JVM, isolating profile-guided optimizations and JIT compilation state. Use jvmArgs to control heap size, GC settings, and other JVM flags. Use jvmArgsPrepend or jvmArgsAppend to add flags without replacing defaults.

- @Warmup: Controls how many iterations run before measurement begins, giving the JIT compiler time to optimize your code to steady state. Parameters: iterations, time, timeUnit.

- @Measurement: Controls how many iterations are recorded and included in the results. Accepts the same parameters as @Warmup: iterations, time, timeUnit.

- @OutputTimeUnit: Controls the time unit displayed in results. Accepts any java.util.concurrent.TimeUnit value (e.g., TimeUnit.NANOSECONDS, TimeUnit.MILLISECONDS).
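These settings multiply together, which is why trimming them shortens a run so much. The sketch below estimates a lower bound on wall-clock time for one benchmark method. The helper is hypothetical, it ignores JVM startup and any @Param combinations, and the "default" figures used in the usage note (5 forks, 5 warmup and 5 measurement iterations of 10 seconds each) are an assumption you should verify against your JMH version.

```java
// Sketch only: rough lower bound on run time for a single benchmark method.
class RunTimeEstimate {

    // Forks run sequentially; each fork performs its warmup iterations
    // followed by its measurement iterations.
    static long estimatedSeconds(int forks,
                                 int warmupIterations, int warmupSeconds,
                                 int measurementIterations, int measurementSeconds) {
        long perFork = (long) warmupIterations * warmupSeconds
                + (long) measurementIterations * measurementSeconds;
        return forks * perFork;
    }
}
```

Under the assumed defaults, `estimatedSeconds(5, 5, 10, 5, 10)` gives 500 seconds, while a single fork with three 1-second warmup iterations and five 1-second measurement iterations, `estimatedSeconds(1, 3, 1, 5, 1)`, gives 8 seconds.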

Threads

@Threads controls how many threads run the benchmark concurrently. The default is 1. Combined with Scope.Benchmark, this is how you measure contention.
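The difference between shared and per-thread state can be pictured with plain threads. The sketch below is only an analogue of Scope.Benchmark versus Scope.Thread, not JMH code: it starts threads by hand, and all names are hypothetical. One method has every thread update the same counter, the other gives each thread its own.

```java
import java.util.concurrent.atomic.AtomicLong;

// Sketch only: plain-thread analogue of shared versus per-thread state.
class SharedVersusPerThread {

    // Analogue of Scope.Benchmark: one counter shared by all threads.
    static long sharedTotal(int threads, int incrementsPerThread) {
        AtomicLong shared = new AtomicLong();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < incrementsPerThread; j++) {
                    shared.incrementAndGet(); // contended: all threads hit the same counter
                }
            });
            workers[i].start();
        }
        joinAll(workers);
        return shared.get();
    }

    // Analogue of Scope.Thread: each thread owns its counter, so nothing is contended.
    static long[] perThreadTotals(int threads, int incrementsPerThread) {
        long[] totals = new long[threads];
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            final int index = i;
            workers[i] = new Thread(() -> {
                long local = 0;
                for (int j = 0; j < incrementsPerThread; j++) {
                    local++;
                }
                totals[index] = local; // becomes visible to the caller after join()
            });
            workers[i].start();
        }
        joinAll(workers);
        return totals;
    }

    private static void joinAll(Thread[] workers) {
        for (Thread worker : workers) {
            try {
                worker.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("Interrupted while waiting for workers", e);
            }
        }
    }
}
```

Both variants do the same amount of counting. The shared variant pays for coordination between threads on every increment, and that cost is what a multi-threaded benchmark with shared state is meant to expose.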

Benchmarking with Parameters

The previous examples all used a single input value (30). But what if you want to see how performance changes with different input sizes? This is where @Param comes in.

Single Parameter

Add a new benchmark class to test multiple input sizes:

src/main/java/com/example/FibonacciParameterized.java

@Param tells JMH to run the benchmark once for each value. Rebuild and run with Maven or Gradle to get one result per value.

Multiple Parameters

Each @Param annotation applies to a single field, but you can use multiple @Param fields to benchmark across several dimensions. JMH runs all combinations automatically, for example 1000/ArrayList, 1000/LinkedList, 10000/ArrayList, and 10000/LinkedList.
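The set of runs produced by several parameter fields is simply their Cartesian product. The sketch below enumerates it for two dimensions. The helper and its inputs are hypothetical, and the order in which JMH itself visits the combinations may differ.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch only: enumerates size/type pairs for two parameter dimensions.
class ParamCombinations {

    // Every size is paired with every list type exactly once.
    static List<String> combinations(int[] sizes, String[] listTypes) {
        List<String> result = new ArrayList<>();
        for (int size : sizes) {
            for (String type : listTypes) {
                result.add(size + "/" + type);
            }
        }
        return result;
    }
}
```

Two sizes and two list types yield four runs; adding a third value to either dimension yields six. Run time grows with the product of the dimensions, so keep parameter lists short.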

Comparing Algorithms

Parameters are powerful for comparing different implementations side by side. Let’s benchmark recursive vs. iterative Fibonacci:

src/main/java/com/example/AlgorithmComparison.java

The iterative version computes fibonacci(30) in 6 nanoseconds while the recursive version takes 3.1 milliseconds, which makes it over 500,000x faster. This is the power of parameterized benchmarks: they make algorithmic trade-offs visible at a glance.
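For reference, the two algorithms being compared could look like the sketch below. This is an assumed shape and not the guide's AlgorithmComparison.java: the dispatch on a string mirrors how a benchmark might switch on a parameter field, and all names are hypothetical.

```java
// Sketch only: the two implementations plus a string-based dispatch.
class FibonacciAlgorithms {

    // Exponential time: recomputes the same subproblems repeatedly.
    static long recursive(int n) {
        return n <= 1 ? n : recursive(n - 1) + recursive(n - 2);
    }

    // Linear time: carries the last two values forward.
    static long iterative(int n) {
        if (n == 0) {
            return 0;
        }
        long previous = 0;
        long current = 1;
        for (int i = 2; i <= n; i++) {
            long next = previous + current;
            previous = current;
            current = next;
        }
        return current;
    }

    // Selects an implementation by name, as a parameterized benchmark might.
    static long compute(String algorithm, int n) {
        switch (algorithm) {
            case "recursive":
                return recursive(n);
            case "iterative":
                return iterative(n);
            default:
                throw new IllegalArgumentException("Unknown algorithm: " + algorithm);
        }
    }

    // How many times faster the fast variant is, given two timings in nanoseconds.
    static double speedup(double slowNanos, double fastNanos) {
        return slowNanos / fastNanos;
    }
}
```

Plugging in the timings quoted in the text, `speedup(3_100_000, 6)` is a little under 517,000, consistent with "over 500,000x".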

Benchmarking Only What Matters

Sometimes you have expensive setup that should not be included in your benchmark measurements, such as generating test data or loading files. JMH provides @Setup and @TearDown annotations with different Level options to control when fixture methods run.

Setup and Teardown

Let’s benchmark an outlier detection algorithm where the dataset generation is expensive but should not be measured:

src/main/java/com/example/OutlierDetection.java

The @Setup(Level.Trial) method runs once before all measurement iterations. Only the findOutliers() method is timed.
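The split between fixture and measured code might look like the sketch below. It is a plain-Java approximation and not the guide's OutlierDetection.java: the JMH annotations appear only as comments, the standard-deviation rule is an assumed detection method, and every name other than findOutliers is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

// Sketch only: fixture method versus measured method.
class OutlierDetectionSketch {

    private double[] data;

    // Would carry @Setup(Level.Trial) in JMH: expensive, runs once, not timed.
    void generateData(int size, long seed) {
        Random random = new Random(seed); // fixed seed keeps the dataset reproducible
        data = new double[size];
        for (int i = 0; i < size; i++) {
            data[i] = random.nextGaussian();
        }
    }

    // Lets callers supply a known dataset, which is handy for checking correctness.
    void setData(double[] values) {
        data = values.clone();
    }

    // Would carry @Benchmark in JMH: flags values lying more than
    // `threshold` standard deviations away from the mean.
    List<Double> findOutliers(double threshold) {
        double sum = 0;
        for (double value : data) {
            sum += value;
        }
        double mean = sum / data.length;

        double squaredDeviations = 0;
        for (double value : data) {
            squaredDeviations += (value - mean) * (value - mean);
        }
        double stdDev = Math.sqrt(squaredDeviations / data.length);

        List<Double> outliers = new ArrayList<>();
        for (double value : data) {
            if (Math.abs(value - mean) > threshold * stdDev) {
                outliers.add(value);
            }
        }
        return outliers;
    }
}
```

Because the dataset is built in the fixture method, the measured method only pays for the detection pass itself.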

Fixture Levels

JMH offers three levels for @Setup and @TearDown:

- Level.Trial: Runs once per benchmark fork. Use this for loading files and building large datasets that are reused across all iterations.

- Level.Iteration: Runs before and after each measurement iteration. Use this to reset mutable state between iterations.

- Level.Invocation: Runs before and after each individual method call. Use sparingly: this adds overhead on every invocation.

For example, use Level.Iteration to provide fresh unsorted data for each iteration of a sorting benchmark:

src/main/java/com/example/SortBenchmark.java

Running Benchmarks from the Command Line

The benchmarks.jar supports a rich set of command-line options. Here are the most useful ones:

Filtering Benchmarks

Run only benchmarks matching a regex, passed as a positional argument to benchmarks.jar.

Overriding Parameters

Override @Param, @Fork, @Warmup, and @Measurement from the command line:

- -f: Number of forks.

- -t: Number of threads.

- -wi: Warmup iterations.

- -i: Measurement iterations.

- -w: Warmup iteration time (e.g., 2s).

- -r: Measurement iteration time.

- -p: Override @Param values.

- -bm: Override benchmark mode (thrpt, avgt, sample, ss).

- -tu: Override time unit (ns, us, ms, s).

Exporting Results

JMH can export results in various formats for further analysis or visualization:

- -rf: Result format. One of text, csv, scsv, json, latex.

- -rff: Result file path. Where to write the output (e.g., results.json).

Using Profilers

JMH ships with built-in profilers. List them with the -lprof option and enable one with -prof:

- stack: Samples hot methods and thread states to show where time is being spent.

- gc: Reports allocation rate, GC pressure, and bytes allocated per operation.

- comp: Reports JIT compilation activity during the measurement window.

- perfnorm: Reports per-operation hardware counters: cache misses, branch mispredictions, and CPI. Linux only.

- async: Generates CPU flamegraphs using async-profiler.

The gc profiler reports bytes allocated per operation (gc.alloc.rate.norm) and GC event counts, which are essential for understanding allocation-heavy code.

Avoiding Common Pitfalls

The JVM is a sophisticated optimizing runtime. Without care, it can silently eliminate or transform the code you are trying to measure, producing misleading results. JMH is designed to help, but you still need to follow certain patterns.

Dead Code Elimination

If a computation’s result is never used, the JIT compiler may eliminate it entirely.

JMH consumes the values returned from @Benchmark methods through an internal Blackhole, preventing elimination. Always return your computed result.
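The contrast between a discarded and a returned result can be sketched as follows. The @Benchmark annotations are left as comments so the snippet compiles with a plain JDK, and the class, field, and method names are hypothetical. Outside a JIT-compiled hot loop both methods run normally; the point is the shape of the code, not an observable difference in this snippet.

```java
// Sketch only: risky versus safe benchmark method shapes.
class DeadCodeSketch {

    // Deliberately non-final so the input is not a compile-time constant.
    double x = Math.PI;

    // Risky shape (would carry @Benchmark): the result is discarded, so an
    // optimizing JIT is free to remove the whole computation.
    void discardsResult() {
        Math.log(x);
    }

    // Safer shape (would carry @Benchmark): the result is returned, and the
    // harness consumes returned values.
    double returnsResult() {
        return Math.log(x);
    }
}
```

If the first shape reports a time close to that of an empty method, that is a strong hint the measured work was optimized away.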

Blackholes for Multiple Results

When you produce multiple results, you can only return one. Use Blackhole.consume() for the rest.

Import Blackhole from org.openjdk.jmh.infra.Blackhole. JMH injects it automatically as a method parameter.

Constant Folding

If the JVM can determine a computation’s inputs at compile time, it folds the entire computation into a constant.

Your IDE may suggest making a @State field such as x final. Do not. Non-final @State fields are essential for preventing constant folding in benchmarks.

Do Not Loop Manually

Never write manual loops inside benchmark methods. The JVM aggressively optimizes loops: it unrolls, pipelines, and hoists invariant computations out of them, producing unrealistically low per-operation numbers.

Best Practices

Use Multiple Forks

The JVM is non-deterministic. Profile-guided optimizations, garbage collection, and thread scheduling vary between runs. A single fork can give misleading results. Use multiple forks (see @Fork) to capture this variance.

Keep Benchmarks Deterministic

Use fixed seeds in your @Setup methods for random number generators.
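A minimal sketch of seeded data generation is shown below. The helper name, the value range, and the seed are arbitrary choices for illustration. In a JMH benchmark this logic would typically sit in a method annotated with @Setup.

```java
import java.util.Random;

// Sketch only: reproducible pseudo-random input data.
class DeterministicData {

    // The same seed always yields the same sequence, so every run and
    // every fork measures identical input.
    static int[] generate(int size, long seed) {
        Random random = new Random(seed);
        int[] values = new int[size];
        for (int i = 0; i < size; i++) {
            values[i] = random.nextInt(1_000_000);
        }
        return values;
    }
}
```

Two calls with the same seed return equal arrays, which makes differences between runs attributable to the code rather than to the data.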

Verify Correctness Alongside Performance

Include assertions in your setup or dedicated test methods to ensure you are benchmarking correct code, not broken code that happens to be fast.
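One way to do this is to compare the implementation under test against a trusted reference before measuring. The sketch below checks a hypothetical insertionSort against the JDK's Arrays.sort and fails fast on a mismatch. Both the implementation and the verify helper are illustrative assumptions.

```java
import java.util.Arrays;

// Sketch only: fail-fast correctness check for code about to be benchmarked.
class SortCorrectnessCheck {

    // Hypothetical implementation under test.
    static void insertionSort(int[] values) {
        for (int i = 1; i < values.length; i++) {
            int key = values[i];
            int j = i - 1;
            while (j >= 0 && values[j] > key) {
                values[j + 1] = values[j];
                j--;
            }
            values[j + 1] = key;
        }
    }

    // Intended to be called once from setup code: throws if the
    // implementation disagrees with the JDK's sort on the sample.
    static void verify(int[] sample) {
        int[] expected = sample.clone();
        Arrays.sort(expected);
        int[] actual = sample.clone();
        insertionSort(actual);
        if (!Arrays.equals(expected, actual)) {
            throw new IllegalStateException("insertionSort produced an incorrect result");
        }
    }
}
```

Running the check in setup keeps it out of the timed region, so it costs nothing in the reported numbers.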

Use Realistic Data

Sorted or regular data can exploit hardware optimizations like branch prediction and cache prefetching, giving misleadingly good results. Use representative data that matches your production workload.

Benchmark Your Own Code

In real projects, organize your benchmarks alongside your source code:

- my-project/
  - pom.xml
  - src/
    - main/java/com/example/
      - MyAlgorithm.java
  - benchmarks/
    - pom.xml
    - src/main/java/com/example/
      - MyAlgorithmBenchmark.java

Running Benchmarks Continuously with CodSpeed

So far, you’ve been running benchmarks locally. But local benchmarking has limitations:

- Inconsistent hardware: Different developers get different results

- Manual process: Easy to forget to run benchmarks before merging

- No historical tracking: Hard to spot gradual performance degradation

- No PR context: Can’t see performance impact during code review

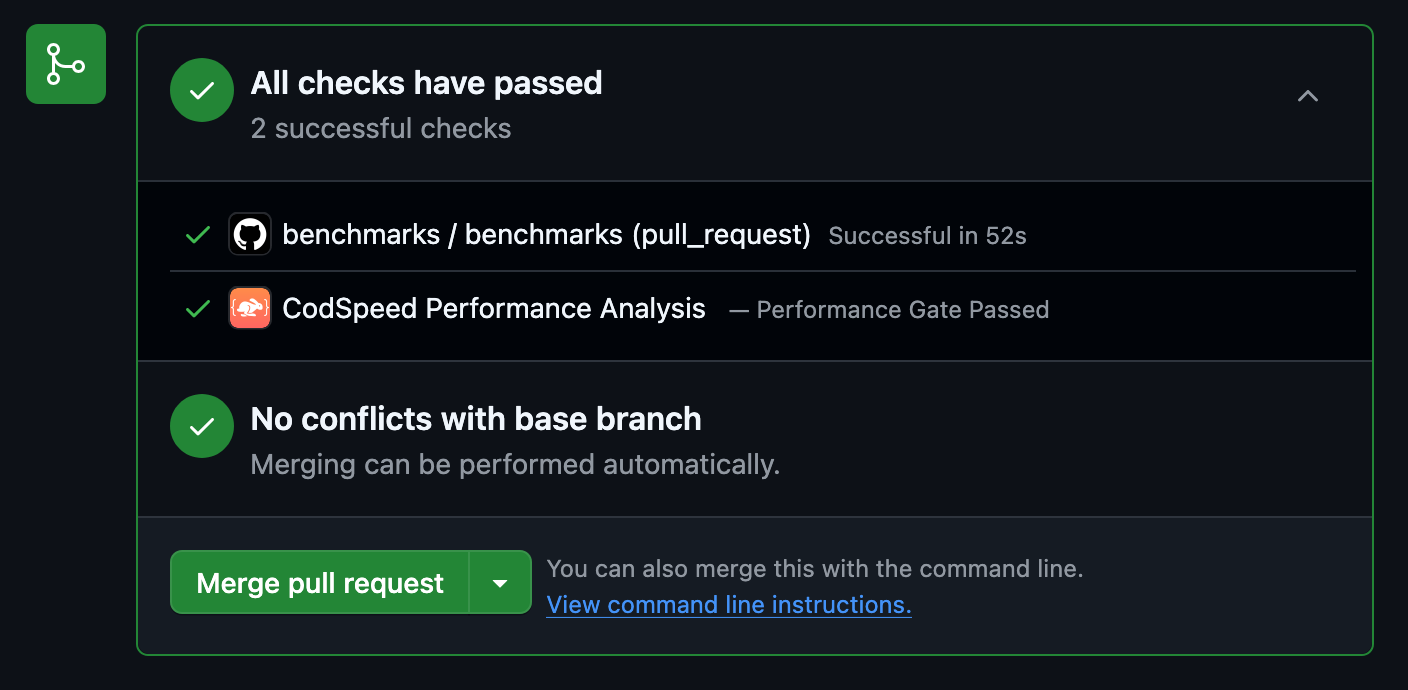

- Automated performance regression detection in PRs

- Consistent metrics with reliable measurements across all runs

- Historical tracking to see performance over time with detailed charts

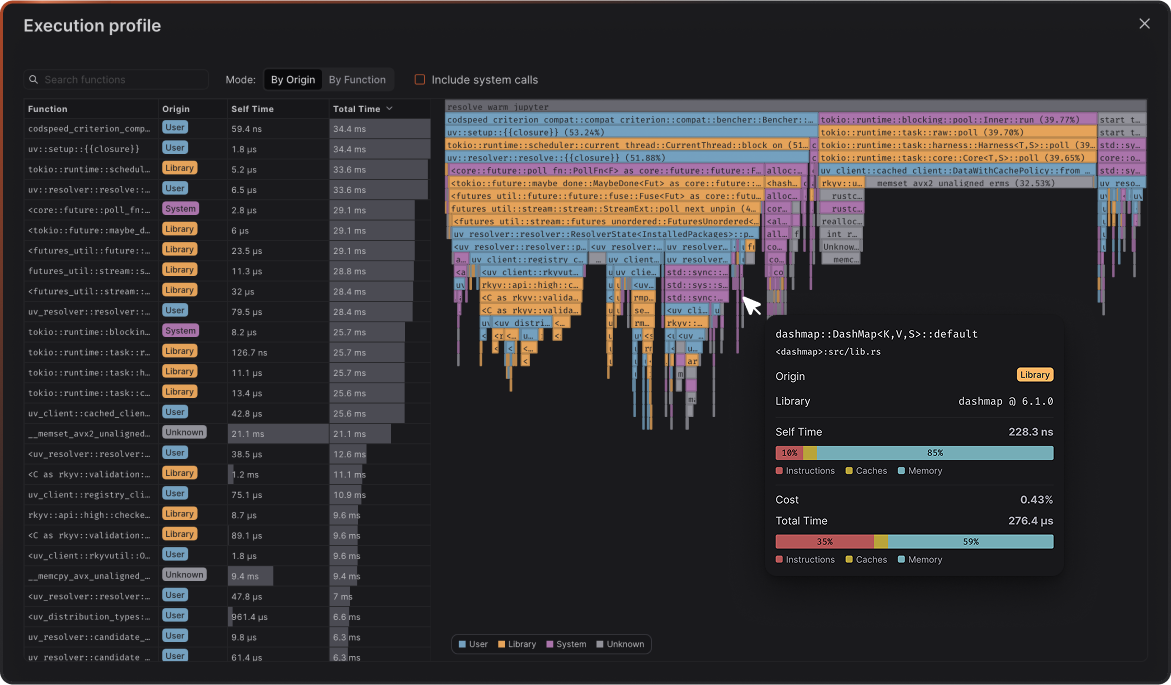

- Flamegraph profiles to see exactly what changed in your code’s execution

How to Set Up CodSpeed

Here’s how to integrate CodSpeed with your JMH benchmarks:

CodSpeed integrates with JMH through a custom fork. Before configuring CI, follow the Java integration reference to add the fork as a Maven or Gradle dependency.

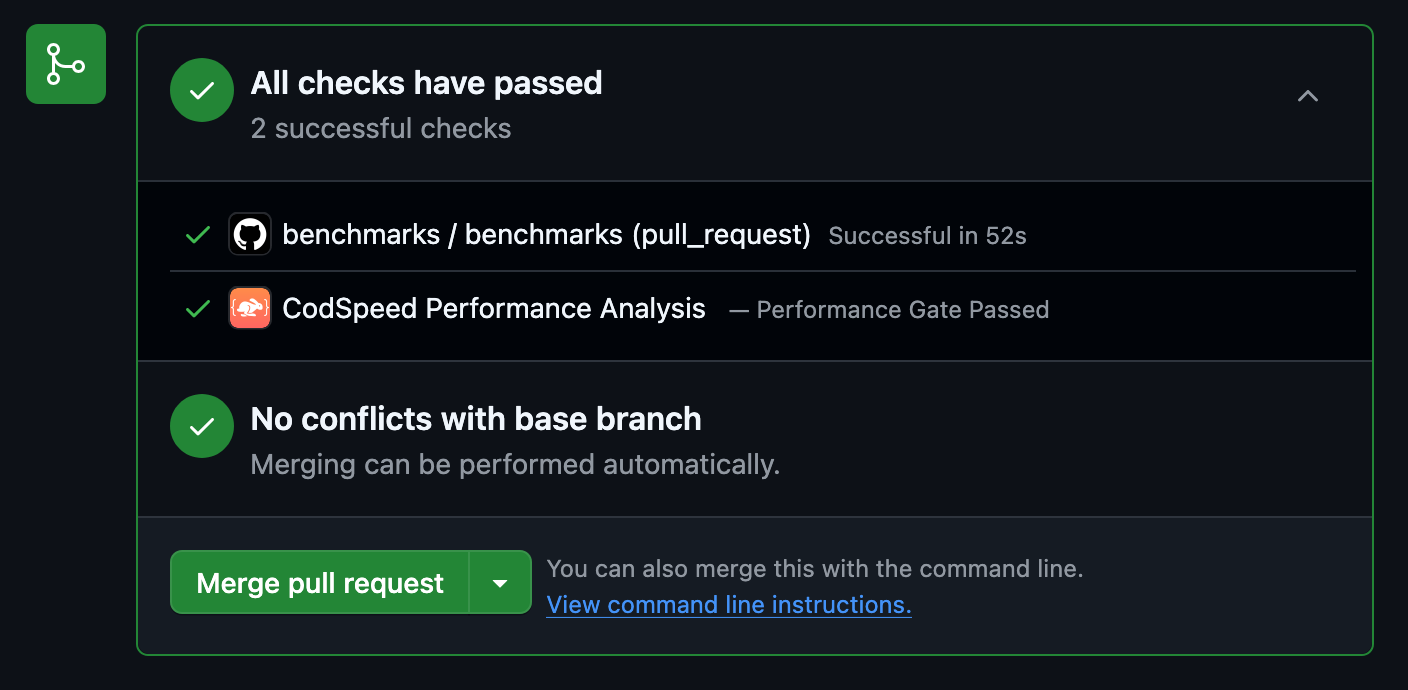

Check the Results

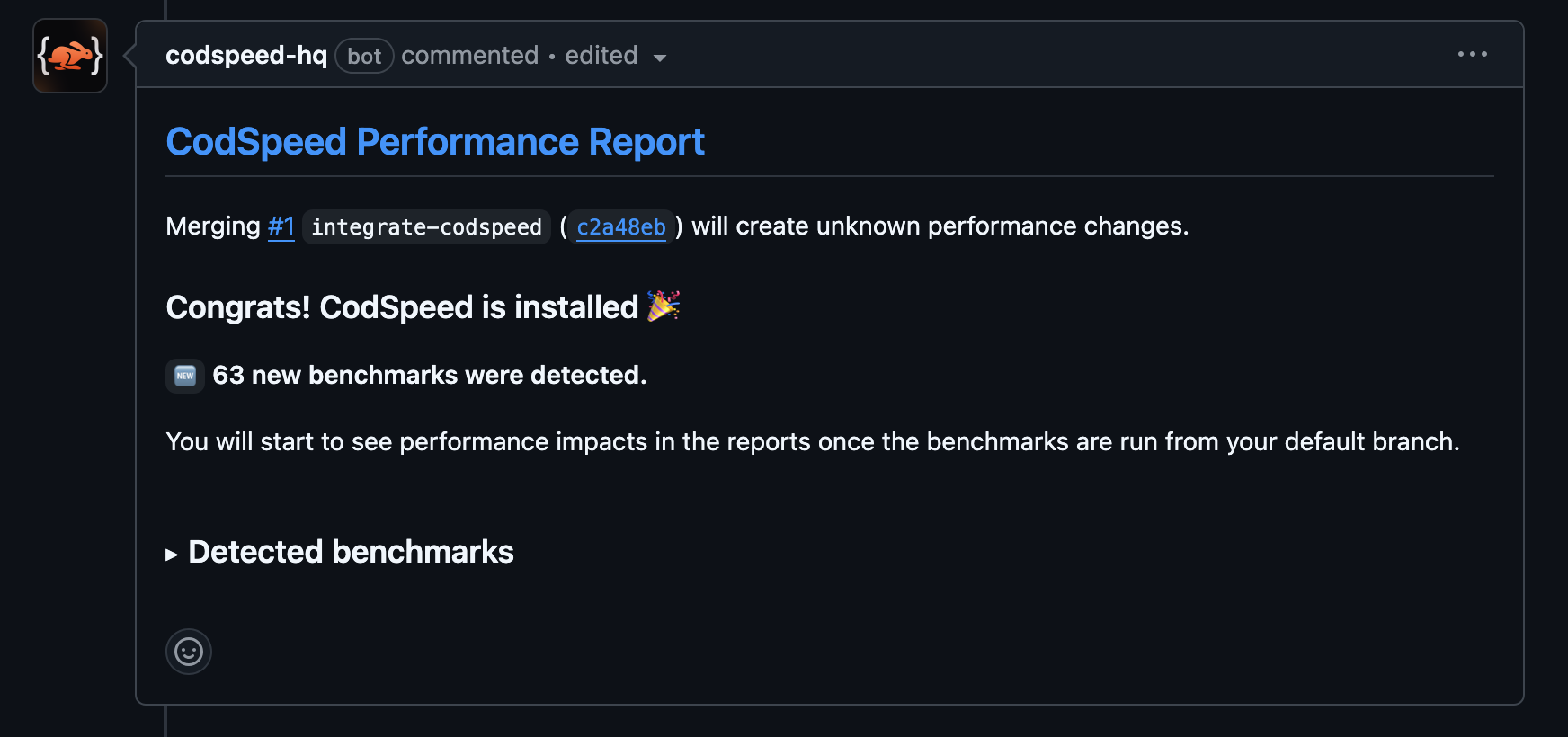

Once the workflow runs, your pull requests will receive a performance report

comment:

Next Steps

Check out these resources to continue your Java benchmarking journey:

- Get Started with CodSpeed: Sign up and start tracking your Java performance in CI

- Java Integration Reference: Set up the CodSpeed JMH fork in your Maven or Gradle project

- Performance Profiling: Learn how to use flamegraphs to optimize your code

- JMH Source Code: Dive into the JMH source and annotation reference