Why the testing Package?

Go has first-class benchmarking built into its standard library, so no external framework is needed. The testing package provides testing.B, which handles iteration control, timing, memory tracking, sub-benchmarks, and parallel execution out of the box. Every Go developer already has the tools installed.

Benchmarks live in _test.go files alongside your code, run with go test, and integrate with the Go ecosystem’s profiling and analysis tools (pprof, benchstat).

Your First Benchmark

Let’s start with the simplest possible Go benchmark: measuring a recursive Fibonacci function.

Setting Up

Create a module and two files: the function (fib.go) and its benchmark (fib_test.go).
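The original listings are not reproduced here, so the following is a minimal sketch of what the two files might contain, assuming a naive recursive function named Fibonacci (a hypothetical name) and a benchmark named BenchmarkFibonacci. To keep it runnable as one file, both pieces sit in package main and a main function calls testing.Benchmark; in a real module, Fibonacci belongs in fib.go, BenchmarkFibonacci in fib_test.go, and you would run it with go test -bench=. (b.Loop requires Go 1.24+).

```go
package main

import (
	"fmt"
	"testing"
)

// Fibonacci returns the nth Fibonacci number using naive recursion.
// In a real module this function would live in fib.go.
func Fibonacci(n int) int {
	if n < 2 {
		return n
	}
	return Fibonacci(n-1) + Fibonacci(n-2)
}

// BenchmarkFibonacci measures Fibonacci(20).
// In a real module this function would live in fib_test.go.
func BenchmarkFibonacci(b *testing.B) {
	// b.Loop (Go 1.24+) decides how many iterations to run and
	// manages the timer, so no b.N bookkeeping is needed.
	for b.Loop() {
		Fibonacci(20)
	}
}

func main() {
	// testing.Benchmark runs a benchmark function outside of `go test`,
	// which lets this single file run with `go run`.
	result := testing.Benchmark(BenchmarkFibonacci)
	fmt.Println("BenchmarkFibonacci", result)
}
```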

- Benchmark functions must start with Benchmark and accept *testing.B.

- b.Loop() (Go 1.24+) controls iteration. Iteration count and timing are handled automatically.

- The benchmark lives in a _test.go file, just like unit tests.

b.Loop() was introduced in Go 1.24. It replaces the older for i := 0; i < b.N; i++ pattern and is less error-prone: it resets the timer the first time it is called, stops the timer when the loop ends, and keeps function arguments and results inside the loop alive so the compiler cannot optimize the loop body away. If you are on an older Go version, use the b.N pattern instead.
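A minimal sketch of the legacy form, using the same hypothetical Fibonacci function (redefined so the block stands alone). With b.N, the testing package calls the benchmark function repeatedly with growing values of b.N until one run lasts at least -benchtime (1s by default), so the loop bound must be b.N itself.

```go
package main

import (
	"fmt"
	"testing"
)

// Fibonacci returns the nth Fibonacci number using naive recursion.
func Fibonacci(n int) int {
	if n < 2 {
		return n
	}
	return Fibonacci(n-1) + Fibonacci(n-2)
}

// BenchmarkFibonacciLegacy uses the pre-Go 1.24 b.N loop.
// The testing package chooses b.N; the body runs exactly b.N times.
func BenchmarkFibonacciLegacy(b *testing.B) {
	for i := 0; i < b.N; i++ {
		Fibonacci(20)
	}
}

func main() {
	// Run outside of `go test` for demonstration purposes.
	fmt.Println("BenchmarkFibonacciLegacy", testing.Benchmark(BenchmarkFibonacciLegacy))
}
```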

Running the benchmarks

Run them with go test -bench=. from the module directory. The -8 suffix in a result name such as BenchmarkFibonacci-8 is the GOMAXPROCS value, which defaults to the number of available CPUs.

Configuring Your Benchmarks

Benchmark Duration

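Control how long each benchmark runs with -benchtime. It accepts either a duration (for example, -benchtime=5s) or an exact iteration count (for example, -benchtime=1000x).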

Memory Allocation Tracking

Add -benchmem to report allocation statistics (B/op and allocs/op) for every benchmark, or call b.ReportAllocs() inside a benchmark to enable them for that benchmark only.
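A minimal sketch of b.ReportAllocs(), using a hypothetical joinNumbers function that builds a string by repeated concatenation so that it allocates on every step. The allocation figures appear in the output even without -benchmem; here main prints them via BenchmarkResult.MemString.

```go
package main

import (
	"fmt"
	"strconv"
	"testing"
)

// joinNumbers builds "0,1,2,...," by repeated string concatenation.
// Each += creates a new string, so the function allocates repeatedly.
func joinNumbers(n int) string {
	s := ""
	for i := 0; i < n; i++ {
		s += strconv.Itoa(i) + ","
	}
	return s
}

// BenchmarkJoinNumbers reports B/op and allocs/op for this benchmark
// even when -benchmem is not passed on the command line.
func BenchmarkJoinNumbers(b *testing.B) {
	b.ReportAllocs()
	for b.Loop() {
		joinNumbers(100)
	}
}

func main() {
	r := testing.Benchmark(BenchmarkJoinNumbers)
	// MemString formats the allocation statistics (B/op, allocs/op).
	fmt.Println(r.String(), r.MemString())
}
```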

Filtering and Skipping Tests

Run only benchmarks (skipping unit tests) by combining a -bench regular expression with -run='^$', which matches no test names.

Key CLI Flags

-bench regexp: Run benchmarks matching the regular expression. Use -bench=. for all.

-benchtime t: Minimum time per benchmark. Accepts a duration (5s, 100ms) or an exact iteration count (1000x).

-benchmem: Report memory allocation statistics (B/op, allocs/op).

-count n: Run each benchmark n times. Use -count=10 or higher for statistical analysis with benchstat.

-cpu list: Comma-separated GOMAXPROCS values to test with (e.g., -cpu=1,2,4,8).

-run regexp: Filter tests. Use -run='^$' to skip unit tests when benchmarking.

-timeout d: Maximum total time for all tests and benchmarks.

Sub-benchmarks and Table-Driven Patterns

Sub-benchmarks with b.Run

Use b.Run() to create sub-benchmarks, the standard way to test different inputs or configurations.
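A minimal table-driven sketch, assuming the hypothetical recursive Fibonacci function and an illustrative input table. Each entry becomes a named sub-benchmark. When run outside go test, testing.Benchmark folds sub-benchmarks into one aggregate result, so main runs the shared helper once per case instead.

```go
package main

import (
	"fmt"
	"testing"
)

// Fibonacci returns the nth Fibonacci number using naive recursion.
func Fibonacci(n int) int {
	if n < 2 {
		return n
	}
	return Fibonacci(n-1) + Fibonacci(n-2)
}

// inputs is the benchmark table; each value becomes one sub-benchmark.
var inputs = []int{10, 15, 20}

// benchFibonacci holds the measured loop for a single input size.
func benchFibonacci(b *testing.B, n int) {
	for b.Loop() {
		Fibonacci(n)
	}
}

// BenchmarkFibonacciInputs creates sub-benchmarks named
// BenchmarkFibonacciInputs/n=10, /n=15, and /n=20.
func BenchmarkFibonacciInputs(b *testing.B) {
	for _, n := range inputs {
		b.Run(fmt.Sprintf("n=%d", n), func(b *testing.B) {
			benchFibonacci(b, n)
		})
	}
}

func main() {
	// Outside `go test`, run each case directly to see per-case results.
	for _, n := range inputs {
		r := testing.Benchmark(func(b *testing.B) { benchFibonacci(b, n) })
		fmt.Printf("n=%d\t%s\n", n, r)
	}
}
```

With go test, a pattern such as -bench='FibonacciInputs/n=20' runs a single case, because -bench splits its regular expression on slashes and matches each level of the benchmark name separately.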

Comparing Algorithms

Sub-benchmarks make algorithm comparison straightforward.
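A minimal sketch comparing two hypothetical implementations, FibRecursive (exponential time) and FibIterative (linear time), on the same input. Each implementation is one sub-benchmark, so results line up side by side under a shared parent name.

```go
package main

import (
	"fmt"
	"testing"
)

// FibRecursive computes Fibonacci numbers with naive recursion.
func FibRecursive(n int) int {
	if n < 2 {
		return n
	}
	return FibRecursive(n-1) + FibRecursive(n-2)
}

// FibIterative computes Fibonacci numbers in a single loop.
func FibIterative(n int) int {
	a, b := 0, 1
	for i := 0; i < n; i++ {
		a, b = b, a+b
	}
	return a
}

// implementations pairs a sub-benchmark name with the function under test.
var implementations = []struct {
	name string
	fn   func(int) int
}{
	{"recursive", FibRecursive},
	{"iterative", FibIterative},
}

// benchImpl runs one implementation on a fixed input.
func benchImpl(b *testing.B, fn func(int) int) {
	for b.Loop() {
		fn(20)
	}
}

// BenchmarkFib runs every implementation as its own sub-benchmark.
func BenchmarkFib(b *testing.B) {
	for _, impl := range implementations {
		b.Run(impl.name, func(b *testing.B) { benchImpl(b, impl.fn) })
	}
}

func main() {
	for _, impl := range implementations {
		r := testing.Benchmark(func(b *testing.B) { benchImpl(b, impl.fn) })
		fmt.Printf("%s\t%s\n", impl.name, r)
	}
}
```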

Benchmarking Only What Matters

Excluding Setup with b.ResetTimer

When your benchmark has expensive one-time setup, use b.ResetTimer() to exclude it from measurements.
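A minimal sketch of both forms, using a hypothetical buildLookup function as the expensive setup and a package-level sink variable (a common idiom, not something the testing package requires) so the lookups are not optimized away. The b.N version needs b.ResetTimer(); the b.Loop version gets the same effect because the timer is reset on the first call to b.Loop().

```go
package main

import (
	"fmt"
	"testing"
)

// buildLookup is a stand-in for expensive one-time setup.
func buildLookup(size int) map[int]int {
	m := make(map[int]int, size)
	for i := 0; i < size; i++ {
		m[i] = i * i
	}
	return m
}

// sink receives results so the compiler cannot drop the lookups.
var sink int

// BenchmarkLookupLegacy: b.N pattern with an explicit timer reset.
func BenchmarkLookupLegacy(b *testing.B) {
	m := buildLookup(100_000)
	b.ResetTimer() // exclude buildLookup from the measurement
	for i := 0; i < b.N; i++ {
		sink = m[i%len(m)]
	}
}

// BenchmarkLookupLoop: b.Loop (Go 1.24+) resets the timer on its first call.
func BenchmarkLookupLoop(b *testing.B) {
	m := buildLookup(100_000)
	i := 0
	for b.Loop() {
		sink = m[i%len(m)]
		i++
	}
}

func main() {
	fmt.Println("legacy", testing.Benchmark(BenchmarkLookupLegacy))
	fmt.Println("loop  ", testing.Benchmark(BenchmarkLookupLoop))
}
```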

With b.Loop() (Go 1.24+), the timer is reset automatically the first time b.Loop() is called, so setup done before the loop is already excluded and b.ResetTimer() is no longer required.

Per-Iteration Setup with Timer Control

When each iteration needs fresh data (e.g., sorting an unsorted slice), use b.StopTimer() and b.StartTimer() to pause timing around the per-iteration setup.

Custom Metrics

Report domain-specific metrics with b.ReportMetric().
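A minimal sketch that reports how many recursive calls one Fibonacci(20) evaluation makes, using a hypothetical fibCounted helper. The total is divided by b.N because the metric is per iteration, and the unit ends in "/op" by convention.

```go
package main

import (
	"fmt"
	"testing"
)

// fibCounted is recursive Fibonacci that also counts its own calls.
func fibCounted(n int, calls *int) int {
	*calls++
	if n < 2 {
		return n
	}
	return fibCounted(n-1, calls) + fibCounted(n-2, calls)
}

func BenchmarkFibCalls(b *testing.B) {
	totalCalls := 0
	for i := 0; i < b.N; i++ {
		fibCounted(20, &totalCalls)
	}
	// Report the per-iteration average as a custom metric.
	b.ReportMetric(float64(totalCalls)/float64(b.N), "calls/op")
}

func main() {
	r := testing.Benchmark(BenchmarkFibCalls)
	// The custom metric is stored in r.Extra and included in r.String().
	fmt.Println("BenchmarkFibCalls", r)
}
```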

Use b.SetBytes(n) to report throughput in MB/s for I/O-bound benchmarks.
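A minimal sketch of b.SetBytes(), hashing a 4 KB buffer with crypto/sha256 as a stand-in for any workload that processes a known number of bytes per iteration. SetBytes records the bytes handled per operation, and the result then includes an MB/s figure.

```go
package main

import (
	"crypto/sha256"
	"fmt"
	"testing"
)

func BenchmarkSHA256_4KB(b *testing.B) {
	data := make([]byte, 4096)
	// Bytes processed per iteration; enables the MB/s column.
	b.SetBytes(int64(len(data)))
	for b.Loop() {
		sha256.Sum256(data)
	}
}

func main() {
	fmt.Println("BenchmarkSHA256_4KB", testing.Benchmark(BenchmarkSHA256_4KB))
}
```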

Parallel Benchmarks

Use b.RunParallel() to benchmark code under concurrent load. By default it creates GOMAXPROCS goroutines and distributes the benchmark iterations among them.
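A minimal sketch that runs the hypothetical recursive Fibonacci function from many goroutines at once. Each goroutine executes the function passed to RunParallel, and pb.Next() keeps handing out iterations until the total reaches b.N.

```go
package main

import (
	"fmt"
	"testing"
)

// Fibonacci returns the nth Fibonacci number using naive recursion.
func Fibonacci(n int) int {
	if n < 2 {
		return n
	}
	return Fibonacci(n-1) + Fibonacci(n-2)
}

func BenchmarkFibonacciParallel(b *testing.B) {
	b.RunParallel(func(pb *testing.PB) {
		// This body runs in each goroutine; pb.Next reports
		// whether more iterations remain.
		for pb.Next() {
			Fibonacci(20)
		}
	})
}

func main() {
	fmt.Println("BenchmarkFibonacciParallel", testing.Benchmark(BenchmarkFibonacciParallel))
}
```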

Call b.SetParallelism(n) before b.RunParallel() to run n*GOMAXPROCS goroutines, increasing concurrency beyond GOMAXPROCS for I/O-bound workloads.

Avoiding Common Pitfalls

Compiler Dead Code Elimination

If a computation’s result is unused, the Go compiler may eliminate it entirely. This is one of the most common sources of misleading benchmark results. Inside a for b.Loop() loop, function arguments and results are kept alive, which prevents this.

With the b.N pattern (Go < 1.24), pass the result to runtime.KeepAlive so the compiler treats it as observed.
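A minimal sketch of the runtime.KeepAlive pattern, using a hypothetical square function small enough for the compiler to inline. Whether the discarded version is actually optimized away depends on the compiler version, so treat the comparison as illustrative rather than a guaranteed result.

```go
package main

import (
	"fmt"
	"runtime"
	"testing"
)

// square is small enough that the compiler will likely inline it.
func square(x int) int { return x * x }

// BenchmarkSquareDiscarded ignores the result; after inlining, the
// compiler may remove the multiplication and time an empty loop.
func BenchmarkSquareDiscarded(b *testing.B) {
	for i := 0; i < b.N; i++ {
		square(i)
	}
}

// BenchmarkSquareKeepAlive passes the result to runtime.KeepAlive,
// so the computation is treated as observed.
func BenchmarkSquareKeepAlive(b *testing.B) {
	for i := 0; i < b.N; i++ {
		runtime.KeepAlive(square(i))
	}
}

func main() {
	fmt.Println("discarded", testing.Benchmark(BenchmarkSquareDiscarded))
	fmt.Println("keepalive", testing.Benchmark(BenchmarkSquareKeepAlive))
}
```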

Do Not Use b.N as Input

Using b.N as a function parameter means the workload grows with the iteration count. The benchmark never converges and reports meaningless numbers.
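A minimal sketch contrasting the misuse with a fixed-size input, using a hypothetical sumTo helper whose cost grows linearly with its argument. Only the correct benchmark is run in main, since the wrong one does work that grows quadratically with b.N.

```go
package main

import (
	"fmt"
	"testing"
)

// sumTo adds the integers 0..n-1; its cost grows linearly with n.
func sumTo(n int) int {
	total := 0
	for i := 0; i < n; i++ {
		total += i
	}
	return total
}

// BenchmarkSumToWrong: do NOT do this. The input depends on b.N, so each
// increase in b.N also makes every iteration slower and ns/op never settles.
func BenchmarkSumToWrong(b *testing.B) {
	for i := 0; i < b.N; i++ {
		sumTo(b.N)
	}
}

// BenchmarkSumTo: fixed input size; the loop only controls repetition.
func BenchmarkSumTo(b *testing.B) {
	for b.Loop() {
		sumTo(1000)
	}
}

func main() {
	fmt.Println("BenchmarkSumTo", testing.Benchmark(BenchmarkSumTo))
}
```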

Keep Benchmarks Deterministic

Use fixed seeds for random data.
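A minimal sketch, assuming a hypothetical newInput helper that seeds math/rand with a constant (42 here is arbitrary). Every run, and every machine using the same Go version, sees the same sorted data and search keys, so timing differences reflect code changes rather than different inputs.

```go
package main

import (
	"fmt"
	"math/rand"
	"sort"
	"testing"
)

// newInput returns the same pseudo-random slice on every call
// because the generator is seeded with a fixed value.
func newInput(n int) []int {
	r := rand.New(rand.NewSource(42)) // fixed seed: identical data across runs
	s := make([]int, n)
	for i := range s {
		s[i] = r.Intn(1_000_000)
	}
	return s
}

func BenchmarkSearchInts(b *testing.B) {
	data := newInput(10_000)
	sort.Ints(data)
	keys := newInput(1024)
	i := 0
	for b.Loop() {
		sort.SearchInts(data, keys[i%len(keys)])
		i++
	}
}

func main() {
	fmt.Println("BenchmarkSearchInts", testing.Benchmark(BenchmarkSearchInts))
}
```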

Comparing Results with benchstat

Raw benchmark numbers are noisy. Use benchstat to compare results with statistical rigor.

Installation

Install it with go install golang.org/x/perf/cmd/benchstat@latest.

Workflow

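Run benchmarks multiple times (at least 10) to collect enough samples, once on the baseline and once with your change, saving each run to a file (for example, go test -bench=. -count=10 > old.txt, then the same command with > new.txt after your change).

Then compare the two files with benchstat old.txt new.txt. Key parts of its output: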

- ± 0%: the 95% confidence interval. Lower means more stable results.

- ~ (p=0.841): no statistically significant difference. The p-value is from a Mann-Whitney U-test; values below 0.05 indicate a statistically significant change.

- n=10: the sample count. Use -count=10 or higher for reliable results.

Profiling with pprof

Go benchmarks integrate directly with the pprof profiler. Generate profiles while benchmarking with these go test flags:

-cpuprofile file: Write CPU profile. Shows where time is spent.

-memprofile file: Write memory allocation profile. Shows where allocations happen.

-blockprofile file: Write goroutine blocking profile. Shows where goroutines wait.

-mutexprofile file: Write mutex contention profile. Shows lock contention hotspots.

Open a profile with go tool pprof, for example go tool pprof cpu.out.

Collect only one profile type at a time for accuracy: profiling itself has overhead that can distort other measurements.

Best Practices

Run on Idle Machines

Close background processes, avoid running on battery, and disable CPU throttling when collecting benchmark data. Noise from other processes can mask real performance changes.

Use -count and benchstat for Decisions

Never eyeball raw ns/op numbers to decide whether a change helped. Run with -count=10 and use benchstat to test for statistical significance. Keep in mind that with about 20 benchmarks at alpha=0.05, you should expect about one false positive by chance alone.

Track Memory Alongside Latency

Always use -benchmem or b.ReportAllocs(). Even if latency stays flat, increased allocations put pressure on the garbage collector and can cause latency spikes under production load.

Use Sub-Benchmarks for Input Variation

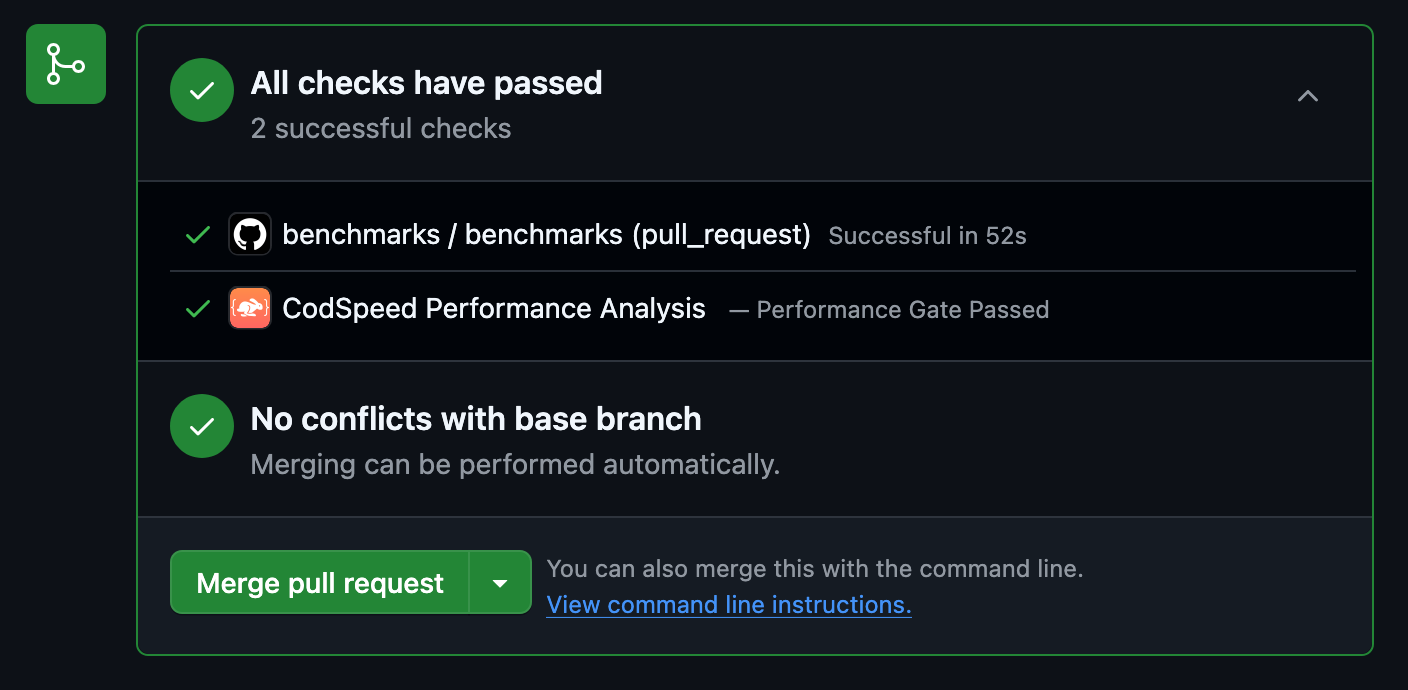

Table-driven sub-benchmarks let you test across input sizes, data shapes, and configurations in a single benchmark function. They also enable filtering from the command line.

Running Benchmarks Continuously with CodSpeed

So far, you’ve been running benchmarks locally. But local benchmarking has limitations:

- Inconsistent hardware: Different developers get different results

- Manual process: Easy to forget to run benchmarks before merging

- No historical tracking: Hard to spot gradual performance degradation

- No PR context: Can’t see performance impact during code review

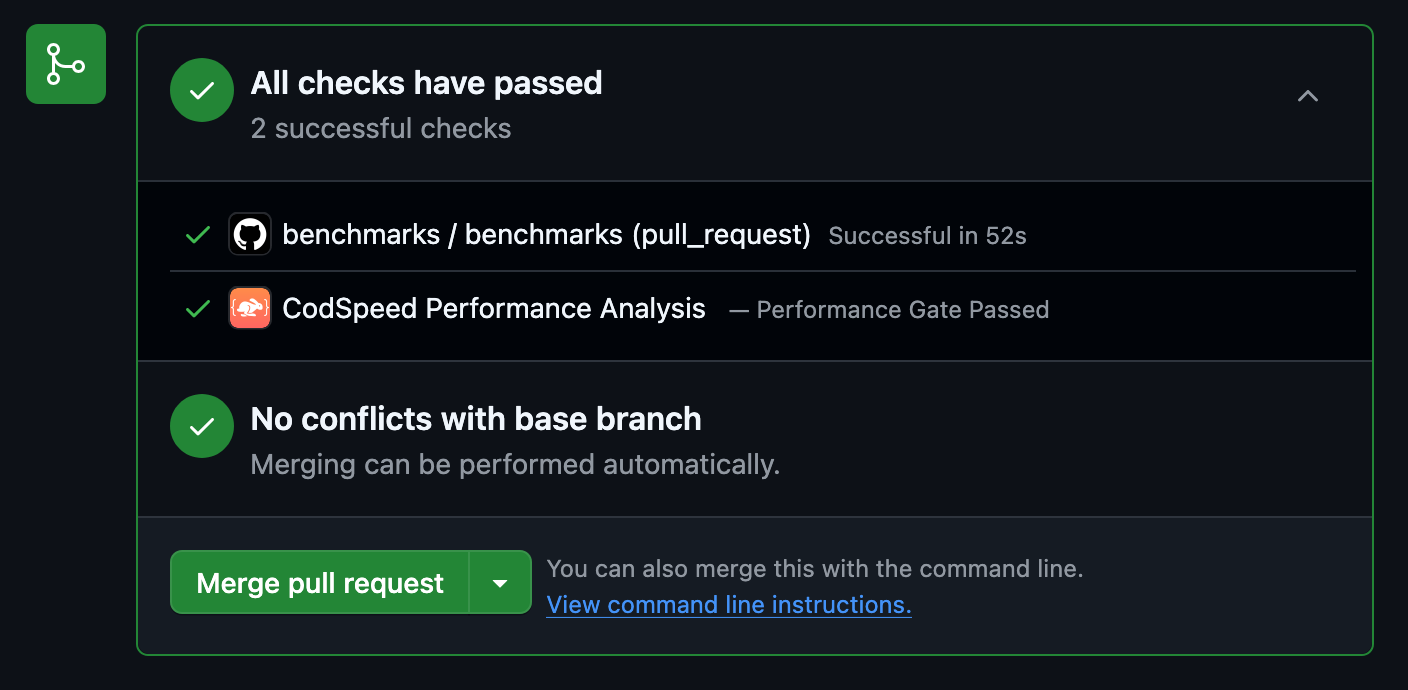

CodSpeed addresses these limitations with:

- Automated performance regression detection in PRs

- Consistent metrics with reliable measurements across all runs

- Historical tracking to see performance over time with detailed charts

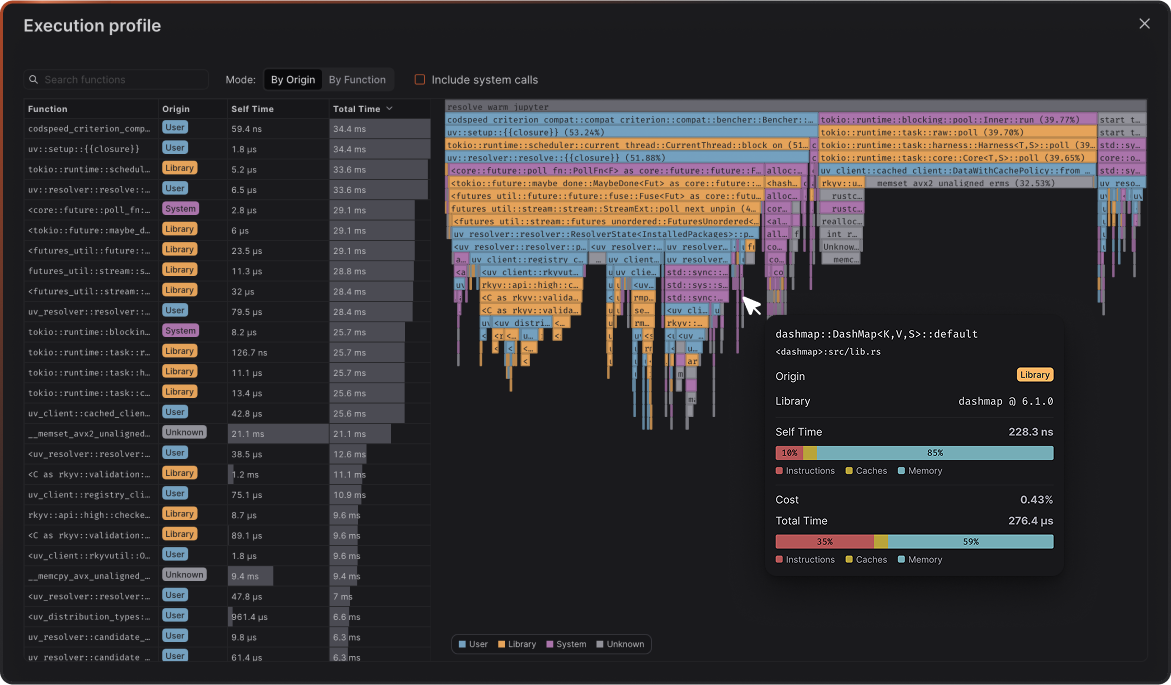

- Flamegraph profiles to see exactly what changed in your code’s execution

How to Set Up CodSpeed

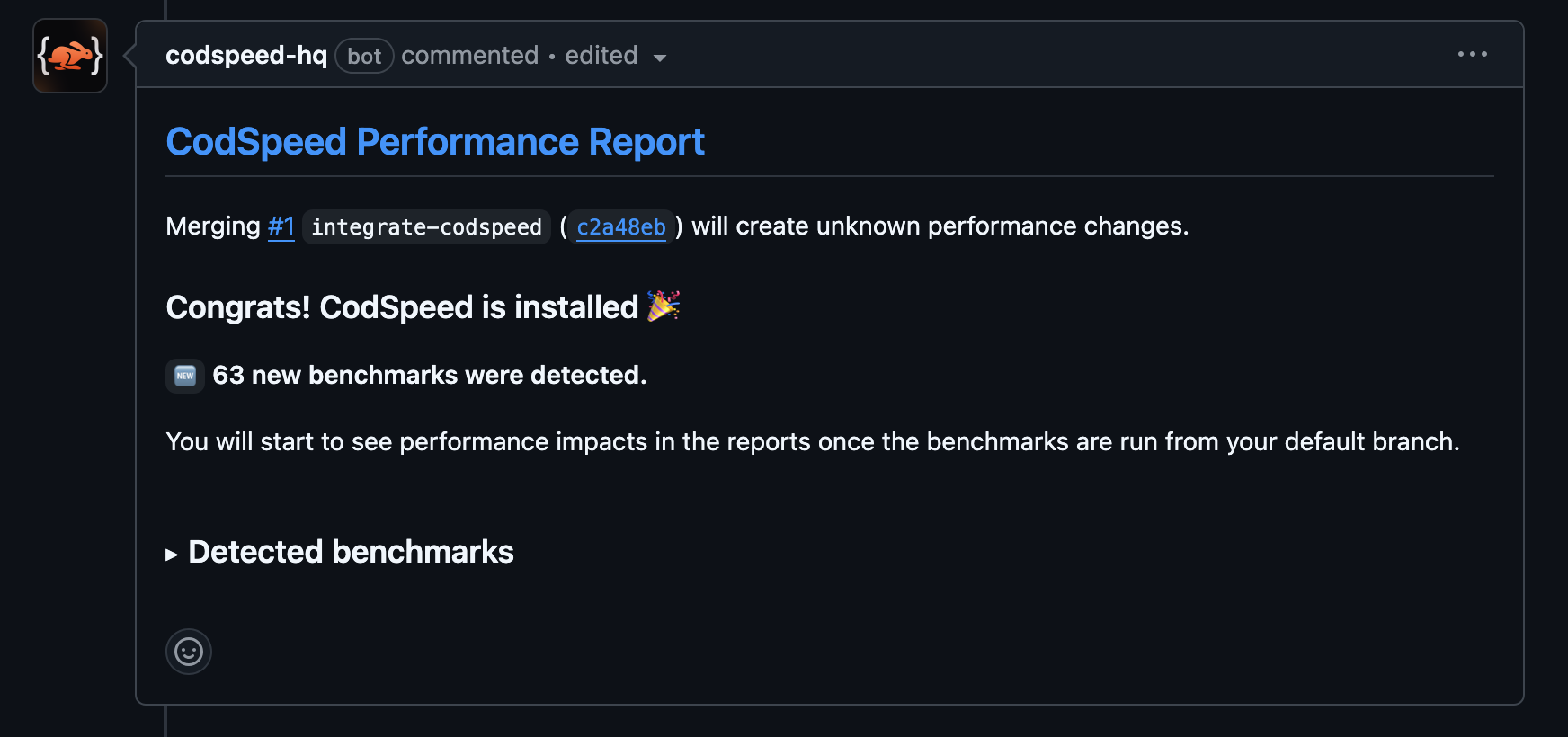

Here’s how to integrate CodSpeed with your Go benchmarks:

Check the Results

Once the workflow runs, your pull requests will receive a performance report comment.

Next Steps

Check out these resources to continue your Go benchmarking journey:

Get Started with CodSpeed

Sign up and start tracking your Go performance in CI

CodSpeed Go Benchmarking Docs

CodSpeed’s Go integration reference and compatibility notes

Benchmarking a Go Gin API

A hands-on guide to benchmarking a real HTTP API with Gin

Performance Profiling

Learn how to use flamegraphs to optimize your code